Agentic AI security: AI agent risks and how to defend at machine speed

Agentic AI is changing cybersecurity in ways most organisations are not prepared for. On the surface, it looks familiar. Attack automation has existed for years. What is new is the combination of speed, autonomy, and the ability to act inside the systems organisations depend on most.

Employees are increasingly using AI agents alongside the systems they rely on every day: email, collaboration platforms, internal dashboards, CRMs, and ticketing tools. These agents can read messages, draft replies, trigger tasks, and update records across multiple systems. Actions that previously required a person to review each step can now happen automatically.

Most organisations already operate with connected systems, delegated permissions, automated workflows, and employees experimenting with AI to work faster. Agentic AI increases the speed and scale at which these behaviours play out.

For security teams, the challenge is expanding. It is no longer only about whether employees can recognise and report a suspicious message in time. It also includes whether employees understand how agentic AI operates inside their workflows, what those agents can access or trigger across systems, and how attackers could exploit those same workflows.

TL;DR

This article introduces an adaptive defence loop to help security leaders respond to AI-driven attacks as they evolve. Used well, that loop helps organisations:

- Spot new attacks sooner – Identify emerging tactics early through employee reports and threat signals.

- Train against real threats – Turn real attacks into simulations so teams practise spotting current attacker behaviour.

- Reduce human-driven risk through targeted interventions – Strengthen behaviour where employee actions and workflows create the most exposure.

- Prove security is improving – Measure reporting speed and workforce risk across the organisation.

Machine-speed automation is no longer ‘coming soon’

A recent Anthropic report on a cyber-espionage campaign describes AI agents performing roughly 80–90% of tactical operations with very limited human intervention. Agentic AI was used across multiple stages of the attack chain, including reconnaissance, vulnerability analysis, and data processing, rather than just drafting or research. Most organisations, by contrast, are still trying to secure those simpler drafting and research use cases.

At the same time, a CrowdStrike report shows how narrow response windows already are, including an average breakout time of 62 minutes, with the fastest recorded attack coming in at under 2 minutes.

Put together, these signals point to a broader shift in risk. Security teams are dealing with attacks that move from initial access to action faster than many review processes can handle, effectively at machine speed.

As Rob Daly, CTO at SoSafe, explains in his analysis of agent-driven attacks: “Most response models assume there’s a bit of breathing room between signal and consequence. Agent-driven execution reduces that gap.”

In that environment, early employee reporting and centralised triage become critical. When attacks move this quickly, the first useful signal may come from the workforce before security teams see it elsewhere. Employees therefore need to be trained to recognise suspicious AI behaviour in the tools and workflows they use every day. Those early signals can give security teams the minutes needed to interrupt automated actions before they spread across systems.

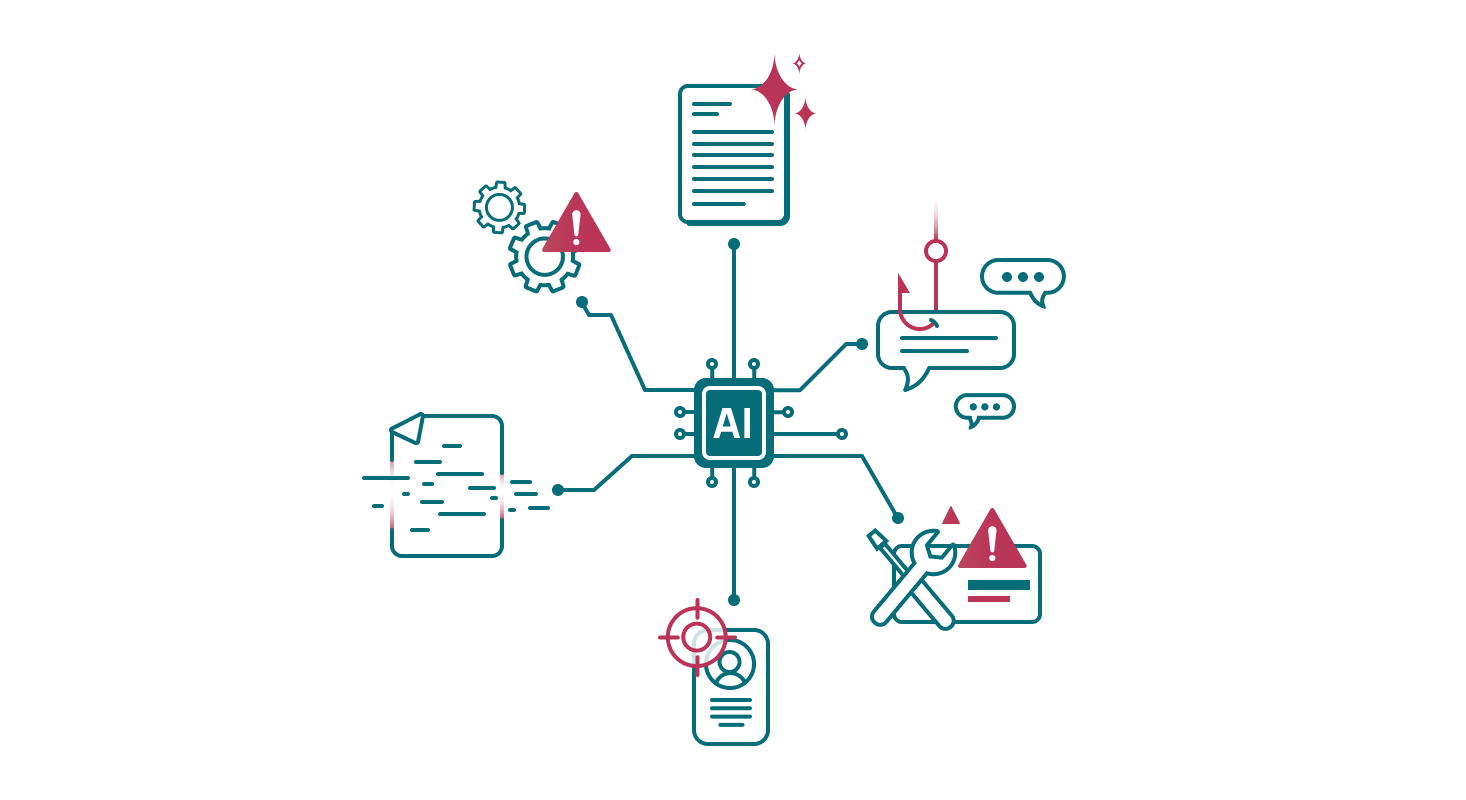

Running crafty agentic AI attacks is becoming easier

Studies on agentic AI in cybersecurity describe agents supporting reconnaissance, vulnerability discovery, and multi-stage intrusions. Unit 42’s agentic AI attack framework shows how insecure design patterns and unsafe tool integrations can accelerate attack execution across agentic workflows.

What is now possible:

- Generate phishing emails and send them at a massive scale.

- Scan networks continuously for exposed services, weak configurations, and leaked credentials.

- Coordinate attacks across email, SMS, and phone.

- Sustain multi-step campaigns over weeks.

- Chain multiple agents together, with each agent’s output feeding the next.

You have seen these tactics before. The difference now is scale. Attack attempts increase, campaigns iterate faster, and successful tactics get reused on your people sooner.

“Scaling a cyber campaign used to mean adding more people behind keyboards. Agentic AI appears to remove much of that grind. Attacks that were tedious to run are becoming cheaper to run well.”

– Rob Daly, CTO, SoSafe

When campaigns iterate this quickly, security teams need mechanisms to turn new attack patterns into immediate defensive action. Employees, in turn, must be prepared to recognise how these attacks evolve so they can spot them as they appear, not weeks later.

When helpful AI agents gain too much authority

Many agentic AI security risks start as workflow improvements. An AI agent begins with a narrow task. It proves useful. A permission gets added to remove a handoff. Then another permission follows because the process runs more smoothly that way. Over time, the agent gains more authority.

What separates an agent from a copilot is delegation. A copilot assists a person’s action. A delegated agent, on the other hand, acts on its own within the permissions it has been given. That is where permission sprawl becomes a governance problem.

In most environments, agents inherit permission models originally designed for people. If one of those agents is compromised, the impact depends on how much authority it has and how quickly it can act. The activity may still look legitimate because valid credentials are doing the work.

That is why least privilege for agents cannot be an afterthought. It needs to be built into the system from the start. As Rob Daly puts it, it is “a design decision.”
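As a rough illustration of what building least privilege in from the start could look like, the sketch below models agent permissions as explicit grants that name the allowed actions and always carry an expiry. The `Grant` type, the `is_allowed` helper, and the resource and action names are hypothetical and not taken from any particular product; the point is only that access is denied by default and cannot quietly outlive its purpose.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Grant:
    """Hypothetical permission grant for an AI agent."""
    resource: str            # system the grant applies to, e.g. "crm"
    actions: frozenset[str]  # explicitly listed actions, e.g. {"read"}
    expires_at: datetime     # every grant must expire


def is_allowed(grants: list[Grant], resource: str, action: str, now: datetime) -> bool:
    """Deny by default: allow only if an unexpired grant names this exact action."""
    return any(
        g.resource == resource and action in g.actions and now < g.expires_at
        for g in grants
    )


now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
grants = [
    Grant("crm", frozenset({"read"}), now + timedelta(hours=8)),
    Grant("email", frozenset({"read", "draft"}), now + timedelta(hours=1)),
]

print(is_allowed(grants, "crm", "read", now))                          # True
print(is_allowed(grants, "crm", "update", now))                        # False: view access only
print(is_allowed(grants, "email", "send", now))                        # False: drafting is not sending
print(is_allowed(grants, "email", "draft", now + timedelta(hours=2)))  # False: grant has expired
```

Under these assumptions, widening an agent's authority requires adding a new, visible grant rather than relying on a permission that was once temporary.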

In practice, control usually slips in predictable ways:

- Temporary permissions that become permanent.

- Execution rights expanding into decision rights.

- View access turning into send, change, or trigger access.

These changes accumulate as workflows become more automated and agents take on more responsibility.

For security teams, this means focusing behavioural reinforcement where authority and risk intersect: around users with delegated access, elevated permissions, or the ability to trigger operational workflows. These roles influence how actions propagate across systems. Strengthening safe habits at those points helps prevent unsafe automation practices or “shadow AI” behaviours from spreading through everyday workflows.

More decisions, less oversight

People do not make better security decisions when they face a constant stream of operational decisions: alerts to review, approvals to grant, or automated tasks to validate. They struggle even more when they lack visibility into what those actions will do across connected systems, or when it is unclear who has the authority to intervene.

Agentic systems intensify these conditions. Agents can generate large volumes of actions or decisions in a short time, leaving teams managing throughput instead of exercising real control. At that point, human review becomes the bottleneck.

“When an agent surfaces 200 decisions an hour, the human becomes the bottleneck. There’s a phase where humans who want to use AI but don’t trust it will suffer.”

– Rob Daly, CTO, SoSafe

This is where reaction-based defence starts to fail. By the time someone reviews the output, the workflow may already have moved forward and the decision may already have taken effect.

A defence model built for slower threats will struggle here. Teams need a defence model that can spot new attack tactics early, help people recognise them quickly, strengthen safer behaviour in the workflows where risk appears, and show whether those efforts are reducing exposure over time.

That is where the four stages of adaptive defence come in.

Adaptive defence loop for agentic AI threats

An effective defence model needs to operate as a continuous loop.

Step 1: Detect

The Detect stage helps security teams spot new attack patterns early enough to act on them while they are still fresh.

“If you see a tactic today, your environment should be harder to exploit tomorrow, not next quarter.”

– Rob Daly, CTO, SoSafe

This often starts with something simple: an employee flags a message that feels off. When those reports are centralised in Threat Inbox, security teams get a live view of the attacks reaching the workforce. That makes it easier to spot emerging social engineering tactics, AI-generated phishing, and multi-channel attack patterns early, while there is still time to investigate, validate, and feed those signals back into defence.
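To make the idea of centralised reports revealing an emerging pattern more concrete, here is a minimal sketch that counts how many distinct employees have reported messages from the same sender domain and flags domains that cross a threshold. It is purely illustrative: the `emerging_indicators` function, the report format, and the threshold are assumptions, and it does not describe how Threat Inbox or any other product works.

```python
from collections import Counter


def emerging_indicators(reports: list[tuple[str, str]], threshold: int = 3) -> list[str]:
    """Return sender domains reported by at least `threshold` distinct employees.

    Each report is a hypothetical (reporter, sender_address) pair.
    """
    seen: set[tuple[str, str]] = set()
    counts: Counter[str] = Counter()
    for reporter, sender in reports:
        domain = sender.rsplit("@", 1)[-1].lower()
        # Count each employee once per domain so repeat reports do not inflate the signal.
        if (reporter, domain) not in seen:
            seen.add((reporter, domain))
            counts[domain] += 1
    return sorted(d for d, n in counts.items() if n >= threshold)


reports = [
    ("alice", "it@payro11-update.example"),
    ("bob", "IT@Payro11-update.example"),
    ("carol", "hr@payro11-update.example"),
    ("alice", "it@payro11-update.example"),  # duplicate report, counted once
    ("dave", "news@vendor.example"),
]

print(emerging_indicators(reports))  # ['payro11-update.example']
```

A real triage pipeline would look at far richer signals than the sender domain, but the same principle applies: individual reports only become a pattern once they are collected in one place.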

Step 2: Mirror

The Mirror stage keeps security training aligned with how attacks actually look today.

Recreate Attack allows security teams to turn real phishing emails or social engineering attempts into simulations within minutes. Instead of relying on generic templates or outdated scenarios, teams can recreate the exact tactics observed in their environment and use them in awareness programmes almost immediately.

This shortens the gap between detecting a tactic and preparing people for it, so employees learn to recognise the attacks they are most likely to encounter, not the ones attackers used months ago.

Step 3: Intervene

The Intervene stage focuses on the people and workflows where authority is highest.

Employees with delegated access, elevated permissions, or the ability to trigger operational workflows have the greatest influence on how actions propagate across systems. Strengthening behaviour in these roles therefore has the biggest impact on reducing risk.

Part of this means helping teams become familiar with AI tools themselves. People who never use these systems often struggle to recognise what they can do, when their behaviour changes, or when something looks wrong. Building that familiarity helps employees move from basic AI literacy to working confidently alongside agents that assist with everyday tasks.

Targeted simulations and behavioural interventions focus on these high-agency users. They help teams recognise unsafe automation practices, question unusual agent behaviour, and avoid “shadow AI” shortcuts that bypass established controls.

Step 4: Measure

The Measure stage shows whether the defence loop is actually improving resilience.

Security teams track indicators such as reporting speed, mean time to respond (MTTR), behavioural engagement, and workforce risk signals using metrics like the Human Security Index. These metrics show whether employees are acting as early detection sensors and whether response capability improves as new tactics appear.
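As a simple illustration of two of these indicators, the sketch below computes reporting speed and MTTR from incident timestamps. The `Incident` fields, the choice of a median for reporting speed, and the sample data are assumptions made for the example; composite scores such as the Human Security Index are not modelled here.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean, median


@dataclass
class Incident:
    """Hypothetical incident record with three timestamps."""
    delivered_at: datetime  # when the malicious message reached the employee
    reported_at: datetime   # when the employee reported it
    responded_at: datetime  # when the security team acted on the report


def _minutes(start: datetime, end: datetime) -> float:
    return (end - start).total_seconds() / 60


def reporting_speed(incidents: list[Incident]) -> float:
    """Median minutes from delivery to employee report."""
    return median(_minutes(i.delivered_at, i.reported_at) for i in incidents)


def mean_time_to_respond(incidents: list[Incident]) -> float:
    """Mean minutes from employee report to security response."""
    return mean(_minutes(i.reported_at, i.responded_at) for i in incidents)


day = (2025, 1, 6)
incidents = [
    Incident(datetime(*day, 9, 0), datetime(*day, 9, 4), datetime(*day, 9, 30)),
    Incident(datetime(*day, 10, 0), datetime(*day, 10, 10), datetime(*day, 10, 24)),
    Incident(datetime(*day, 11, 0), datetime(*day, 11, 6), datetime(*day, 11, 26)),
]

print(reporting_speed(incidents))       # 6.0
print(mean_time_to_respond(incidents))  # 20.0
```

Tracked over successive periods, figures like these show whether the gap between an attack arriving and the team acting on it is actually shrinking.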

Together, these stages form an adaptive defence loop: detection reveals new tactics, mirroring turns them into training, interventions strengthen behaviour in the right workflows, and measurement shows whether those changes are reducing risk.

Networking the defence loop

Attackers reuse what works. Defenders need to do the same.

Many organisations still learn from incidents in isolation. Signals arrive slowly, lessons spread slowly, and the same tactics succeed elsewhere.

When reporting, simulation, behavioural intervention, and measurement are connected, a single incident becomes a learning signal. The tactic can be identified, translated into reinforcement and simulation, prioritised for similar users or workflows, and tracked for behavioural change.

When those signals are shared across organisations, each incident strengthens the wider defence network. Over time, the ecosystem as a whole becomes harder to exploit.

Are you still defending at human speed?

Agentic AI changes risk in two directions at once. Attacks become faster, cheaper, and easier to scale, while employees face growing decision volume and hand more control to systems acting on their behalf. Risk no longer sits only at the point of attack. It increasingly forms inside the workflows and permissions that shape everyday work.

The organisations that adapt will not just detect attacks faster. They will build defence loops that learn faster. That is what it takes to stay effective when attacks move faster and authority increasingly shifts to machines.