What is deepfake social engineering changing inside your workplace?

Picture the request your finance team would least want to receive at 17:42 on a Friday. It arrives as a voice note or short video message. The context checks out. Nothing about it feels exaggerated. It sounds like a senior stakeholder who wants something handled before the day ends, which leaves the employee deciding whether to verify the request or keep work moving.

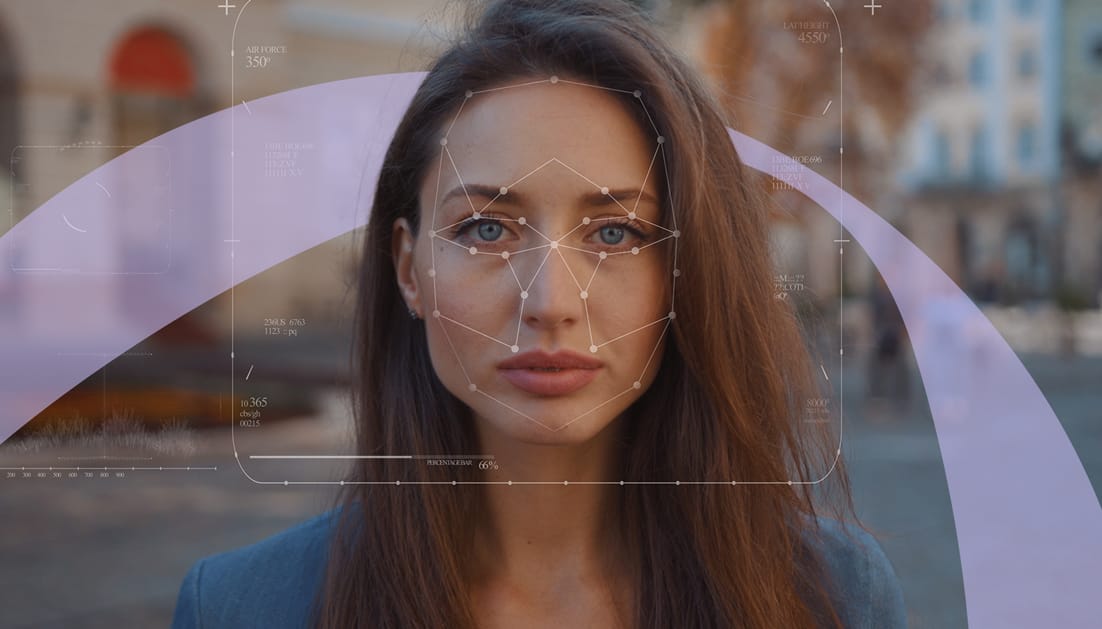

Deepfake social engineering puts pressure on routine decision-making. A request can arrive with the right voice, the right face, and the right level of urgency, which makes it feel credible before anyone has time to slow it down. That shifts the challenge from spotting obvious mistakes to handling trust, authority, and timing inside everyday workflows.

According to SoSafe’s State of AI and Social Engineering 2025 Report, 30% of leaders encountered voice-cloning attempts in 2025, while 33% of organisations had already experienced deepfake incidents. According to the Adaptive Defense Playbook 2026, surveyed security professionals reported a 43% increase in deepfakes and voice or video impersonations over the past year (2025). The survey covered 9 European markets and organisations in the finance, technology, manufacturing, healthcare, professional services, and insurance sectors.

TL;DR

In this article, we look at why defence now depends less on spotting broken language and more on building a culture where pausing, verifying, and escalating feel routine under pressure.

30% of leaders encountered voice-cloning attempts in 2025.

33% of organisations had already experienced deepfake incidents.

43% reported an increase in deepfakes and voice or video impersonations.

(Source: State of AI and Social Engineering 2025 Report, Adaptive Defense Playbook 2026)

How deepfake impersonation puts pressure on everyday judgement

In Europol’s IOCTA report, voice deepfakes are identified as a way to increase the credibility of campaigns linked to business email compromise (BEC) and CEO fraud. That same concern comes through in the ECB summary, where market participants describe a growing number of difficult-to-detect attacks using deepfake emails and voice messages alongside social engineering.

Trust can now be copied into ordinary business interactions with far less friction.

Deepfake impersonation succeeds when it fits neatly into work that already feels familiar. A request tied to a payment, a supplier update, a document review, a password reset, or a quick callback arrives as another item moving through an employee’s day. That makes the first decision more difficult, because the employee is responding to a task that appears to belong.

The message does not even have to come from a senior leader. It can come from a known colleague, a supplier contact, a recruiter, or anyone else whose role gives the request enough credibility to prompt quick action.

Research on human cognition helps explain why these moments are hard to handle well. People make quicker decisions when a request feels relevant, familiar, and socially easy to accept. Deepfake impersonation strengthens those cues inside the workflow itself, which is why the judgement challenge now sits closer to everyday behaviour than to classic phishing detection.

Why security awareness is moving beyond classic phishing cues

A growing number of deepfake incidents are pushing security awareness into new territory. Cases such as Arup’s $25 million deepfake fraud and the attempted scam disclosed by WPP show how trust now moves across voice, video, and collaboration tools, often inside workflows employees already recognise. Arup’s CIO described the incident as psychology plus deepfake technology rather than a traditional systems compromise, which is a useful way to frame the shift.

That shift is showing up in research too. A chapter on the move from security awareness and training to human risk management argues that older awareness models have been constrained by a compliance-led mindset and limited evidence of lasting behaviour change. An article on AI-deepfake scams and the importance of a holistic communication security strategy calls for a broader mix of technical, organisational, and educational measures.

Awareness is becoming less about recognising suspicious content in isolation and more about preparing people to verify, escalate, and stay safe when a convincing deepfake request arrives through voice, video, chat, or email.

The judgement skills security teams need to build in employees next

Employees will need more than recognition skills. They will need practice making sound decisions when a request looks plausible, arrives through a familiar channel, and needs to be checked without slowing work to a halt.

A study on skills-based training found that employees who received iterative practice and feedback improved risk recognition and reporting for up to 12 months compared with awareness-only training. That is a useful benchmark for teams reviewing whether their current approach is actually shaping behaviour or simply delivering content.

Employees need rehearsal across voice, video, messaging, and callback workflows, using the escalation routes they are expected to follow in real work.

| Outdated cue-based training | Skills-based training for AI-enabled impersonation |
| --- | --- |
| Teaches employees to scan for red flags. | Trains employees to respond well when a request looks plausible. |
| Relies on generic examples. | Uses role-aware scenarios tied to real work. |
| Ends with completion. | Reinforces decisions with feedback and follow-up. |
| Measures participation. | Measures reporting, response quality, and repeated behaviour. |

How a stronger security culture gives employees cover to question

A stronger security culture lowers the social cost of checking a request. When verification feels awkward, political, or unnecessarily disruptive, employees are more likely to stay quiet and move on.

According to the Adaptive Defense Playbook, security culture is still uneven in practice.

- Around 22% of security professionals describe a culture that reinforces secure behaviour through manager ownership, peer support, and feedback.

- Around 21% say secure behaviour is sustained through everyday workflows such as verification and reporting.

- Another 21% still rely on more compliance-led, box-ticking approaches, including policy sign-offs.

A study found that leadership behaviour has a meaningful influence on expected security outcomes, with task-oriented leadership showing a direct relationship with compliance outcomes. A review on human factors in cybersecurity also argues for adaptive training, organisational culture, and human-centred design rather than user blame.

There is a second issue leaders should watch: overload. A study on cybersecurity fatigue surveyed 351 employees and linked fatigue with stress, burnout, and reduced productivity, while simplified protocols and support strategies helped reduce those effects. If the verification path is slow, vague, or embarrassing, employees will work around it. A stronger security culture makes the secure action the workable one.

How adaptive defence turns one reported signal into a stronger response loop

As deepfakes and other AI-driven impersonation tactics rise, organisations need to adapt at the pace of changing attacks. According to the Adaptive Defense Playbook, 88% of surveyed respondents say they are likely to invest in building an adaptive, behaviour-driven security culture in the coming year (2026).

That shift moves awareness away from periodic content and towards a response loop that learns from live signals, reinforces behaviour in context, and shows whether response is improving over time.

With SoSafe’s adaptive defence model, teams can use Threat Inbox to review and classify reported emails and send feedback to the employee who reported them. Recreate Attack helps turn screenshots of real phishing emails into editable simulations in minutes.

Policy to Lesson converts static policy content into short, interactive learning, while Learn Anywhere makes that reinforcement easier to deliver across devices and languages. Human Risk OS™ then gives security teams clearer visibility into whether reporting, questioning, and escalation are improving while the pattern is still relevant, and gives them stronger evidence to show leadership how the programme is progressing.

The same loop also makes readiness easier to test: which requests remain hardest to challenge, which channels still receive lighter coverage, and which behaviour signals show that response is improving in practice rather than simply being completed?

Adaptive defence connects live signals, current learning, and behavioural evidence in one response model.

See how 1,000 security leaders are benchmarking attack exposure, adaptation speed, and behavioural readiness in practice.

Read the full report