How to spot a deepfake

At the beginning of this year, a video was released on Facebook and YouTube in which Ukrainian President Volodymyr Zelensky orders Ukrainian soldiers to ‘lay down their weapons’ and surrender to Russian troops. Although it wasn’t clear at first glance, this was a fake, altered video that hackers placed on the website of Ukraine 24, a national news network, with Zelensky’s message appearing in the scrolling ticker. While this manipulated video was ‘poorly made’, it still shows how deepfake videos have the potential to rapidly spread disinformation. Tarnishing trust in authentic media is another serious byproduct.

But it didn’t start out this way. Brands started using this technology even before the trend spiraled out of control. Back in 2017, an anonymous Reddit user shared doctored adult videos featuring celebrities like Gal Gadot and Taylor Swift. The trend spread like wildfire and businesses were quick to hop on. One brand enabled users to bring old photographs of deceased relatives to life, with added voice.

Today, advances in deepfakes make you think twice about believing what you see, even with your own eyes. Let’s look into what exactly deepfakes are and how organisations and individuals can protect themselves against them.

What is a deepfake?

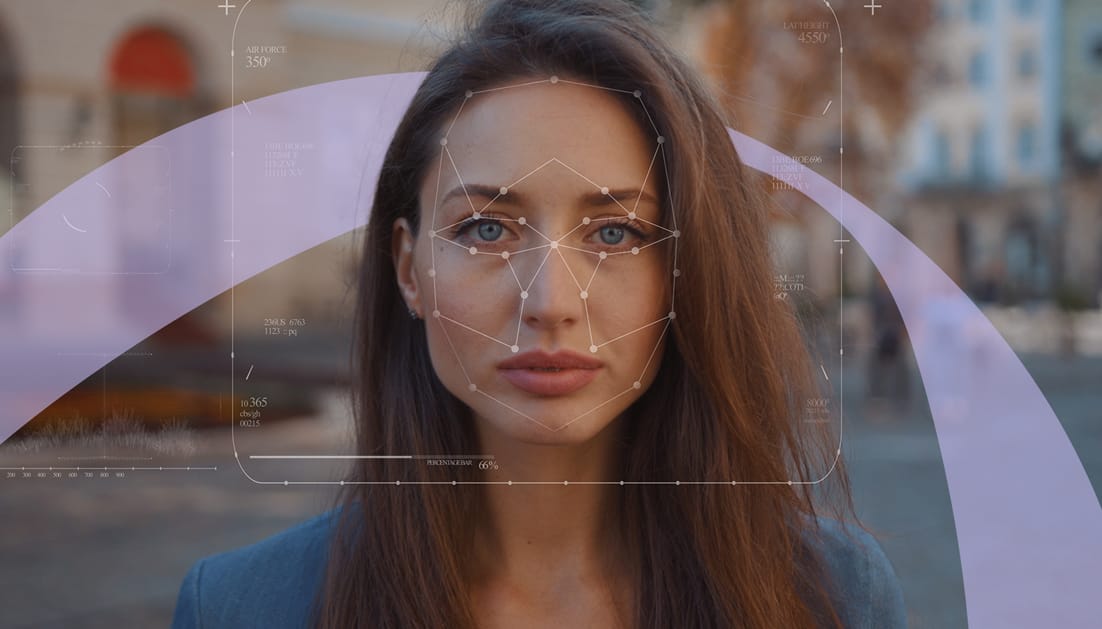

A form of artificial intelligence called deep learning is used to create machine-manipulated video or audio content that deceives people into thinking that those in the video, usually public figures, are the ones talking or performing an action. There are several methods that can be used to create deepfakes; the most popular involves using neural networks with face-swapping techniques. As the technologies behind deepfakes become more sophisticated, imitations of an individual’s movements and even their specific diction become more and more believable, which makes fake media harder to distinguish from the real thing.

The number of harmful deepfakes has doubled roughly every six months since 2018, with 85 thousand released up to December 2020. It has become evident that deepfakes have the potential to delegitimize reality, and more recently they have been ushering in a trend of fake news, unethical political agendas and revenge plots.

Professional deepfakes involve high-end processing systems with powerful graphics and experts performing meticulous clean-ups. Computers are getting better at simulating reality, and with the added benefit of cloud storage, hackers seem to have the best tools at their disposal. Even those without expertise are now able to create synthetic content with the help of easily accessible apps.

Are deepfakes illegal?

There are many ways deepfakes can be used, ranging from harmless satire, art, or entertainment to disinformation, adult content, political scandals, fake news, and even modern warfare. Creating deepfakes is not in itself illegal. However, if they violate the subject’s personal rights or are used for malicious or criminal gain, there can be legal repercussions. The regulatory landscape in the EU is a complex mix of hard and soft rules. Policies such as the GDPR, the AI regulatory framework, the copyright regime, and the action plan on disinformation provide general guidance. However, whether forged videos can be used as evidence in a court of law remains challenging to determine. It is imperative that regulations remain responsive to the evolving trends in deepfake technology. On topics ranging from Covid-19 and climate change to the Ukraine war, deepfakes can damage reputations just as easily as they can become a national security risk.

Another concern with the legalities around deepfakes is that there is no universal regulation to provide standard protection yet. Nations are individually tackling this issue, albeit slowly, in response to technological advancements that pose dangers to citizens. Israel, for example, introduced a so-called Photoshop law that requires brands to label retouched visuals, and it may even extend to fabricated videos used by content creators. This is already a step in the right direction.

How do deepfakes work?

A large data set including hundreds and thousands of sample photos and videos is used to train artificial neural networks to recognise whether a piece of content is counterfeit or real. Once the neural networks are no longer able to make the correct distinction, the photo or video is deemed convincing enough to fool humans.
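To make the idea of a network that can no longer tell real from fake a little more concrete, here is a minimal Python sketch. It does not train anything; it only assumes that some detector has already produced a score between 0 and 1 for each sample (its estimated probability that the sample is real). The function names, the 0.5 threshold, and the 0.1 margin are illustrative assumptions, not part of any specific deepfake tool.

```python
from typing import Sequence


def detector_accuracy(
    real_scores: Sequence[float],
    fake_scores: Sequence[float],
    threshold: float = 0.5,
) -> float:
    """Fraction of samples a (hypothetical) detector labels correctly.

    Each score is the detector's estimated probability that a sample is real.
    A real sample counts as correct when its score is at or above the
    threshold; a fake sample counts as correct when its score is below it.
    """
    total = len(real_scores) + len(fake_scores)
    if total == 0:
        raise ValueError("need at least one score")
    correct_real = sum(1 for s in real_scores if s >= threshold)
    correct_fake = sum(1 for s in fake_scores if s < threshold)
    return (correct_real + correct_fake) / total


def fools_detector(
    real_scores: Sequence[float],
    fake_scores: Sequence[float],
    margin: float = 0.1,  # assumed tolerance around coin-flip accuracy
) -> bool:
    """True when the detector does no better than guessing (about 50%)."""
    return abs(detector_accuracy(real_scores, fake_scores) - 0.5) <= margin


# Early in training: the detector separates real and fake easily.
early_real = [0.90, 0.80, 0.95, 0.70]
early_fake = [0.10, 0.20, 0.05, 0.30]

# Later: the fakes score just like real footage, so accuracy drops to chance.
late_real = [0.60, 0.40, 0.55, 0.45]
late_fake = [0.52, 0.48, 0.61, 0.39]

print(fools_detector(early_real, early_fake))  # False
print(fools_detector(late_real, late_fake))    # True
```

When the detector’s accuracy falls to roughly 50%, it is effectively guessing, which is the point at which the fake content is considered convincing.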

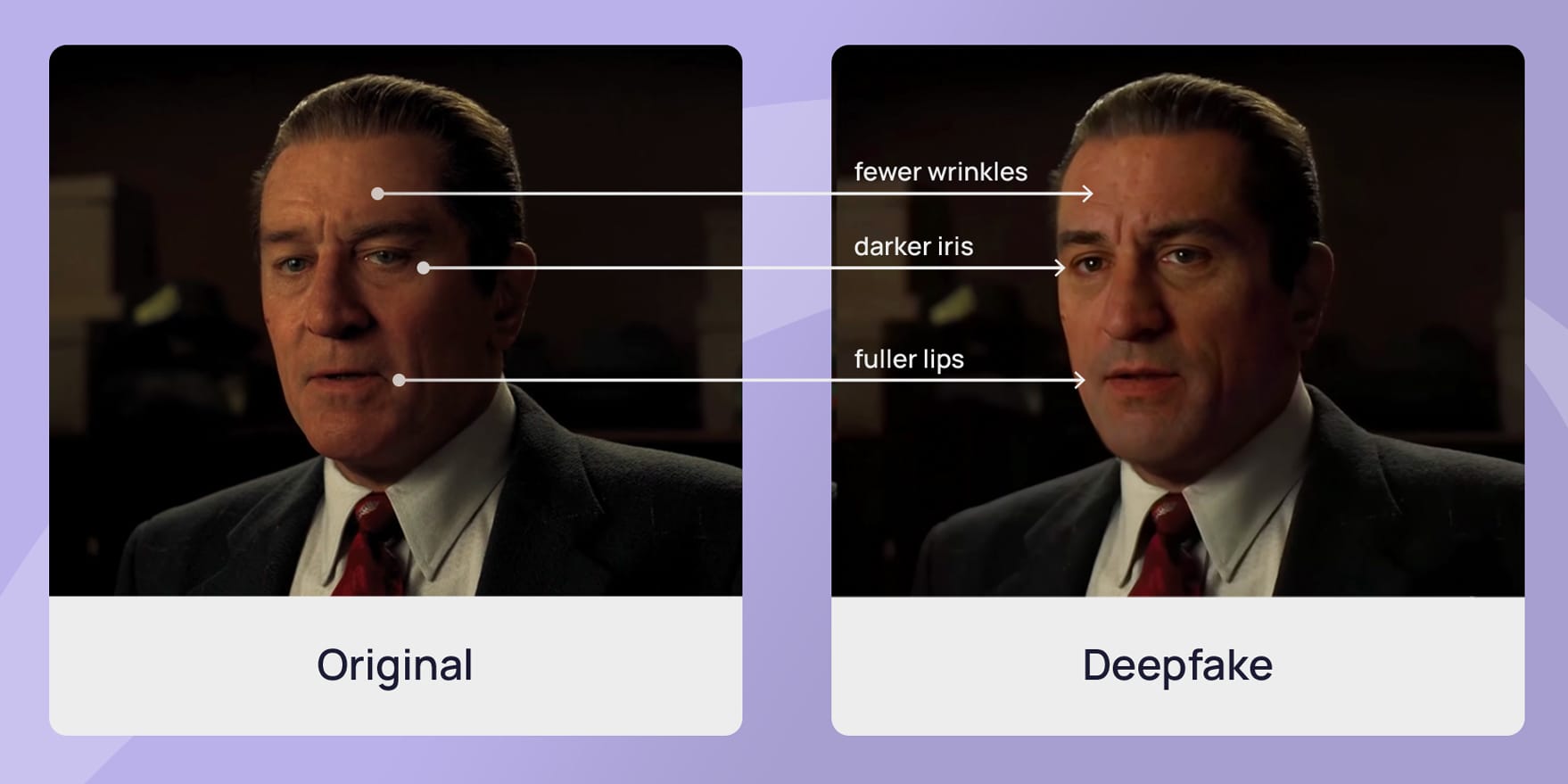

Face swapping, for example, uses a computer-created version of someone’s face. The AI can use existing videos of that person to almost perfectly mimic their facial expressions, and it learns more with each video. The more the AI learns, the more believable the deepfake becomes.

Voice swapping and voice cloning work in a similar fashion: the more audio recordings there are of an individual, the better the AI can learn and imitate their voice.

Deepfake technology has become so prevalent that anyone can use apps like FaceApp to generate high-quality deepfakes. This app is mainly used for entertainment, but it makes it possible for even inexperienced users to create strikingly realistic videos of famous individuals with ease.

What is the purpose of deepfakes?

The superimposition of voices and faces gives us the opportunity to take a composition into realistic or fictional situations. Deepfake technology has proliferated across borders into industries, professions, and businesses, and it is slowly seeping through the cracks in between. It has been giving a voice to those behind the scenes and a platform to those who want to be heard. The good, the bad, and the ugly are all apparent: appalling as well as entertaining. It depends which side you’re on.

For example, it is continuing to revolutionize the movie industry as more filmmakers bring a facelift to their actors to re-introduce their younger versions through the de-aging illusion. Other forms of entertainment like parodies, narrations, and e-books are also using deepfakes as a tool for facilitating creative storytelling.

Deepfakes are also helping educators use synthetic media to recreate historical icons and bring their teaching to life, quite literally. Engaging students with realistic depictions of major events is reinvigorating modern education.

An intelligent tool that is assisting innovation, deepfake technology is here to influence watchers and listeners into believing what they see and hear. From retailers to criminal investigators to art curators, deepfakes are amplifying messages on a very visible podium for those who want to make a difference.

However, deepfake technology is also a grave cause of fear amongst those who are caught in the crosshairs. The threats include extortion, fraud, and, more recently, fake news. Fake news isn’t new, but it has resurfaced and multiplied to dangerous proportions. Deceiving the public with false, unreliable stories bubble-wrapped in virality, fake news is used by criminals and those with vengeful agendas. Deepfakes can be as exploitative as they can be empowering.

Warfare manipulation is currently one of the most active trends in the world of deepfakes. Taking a closer look, Russia’s war against Ukraine is also being waged digitally, with hackers disabling government websites and spreading disinformation. The fake surrender by Ukrainian President Zelensky is a prime example.

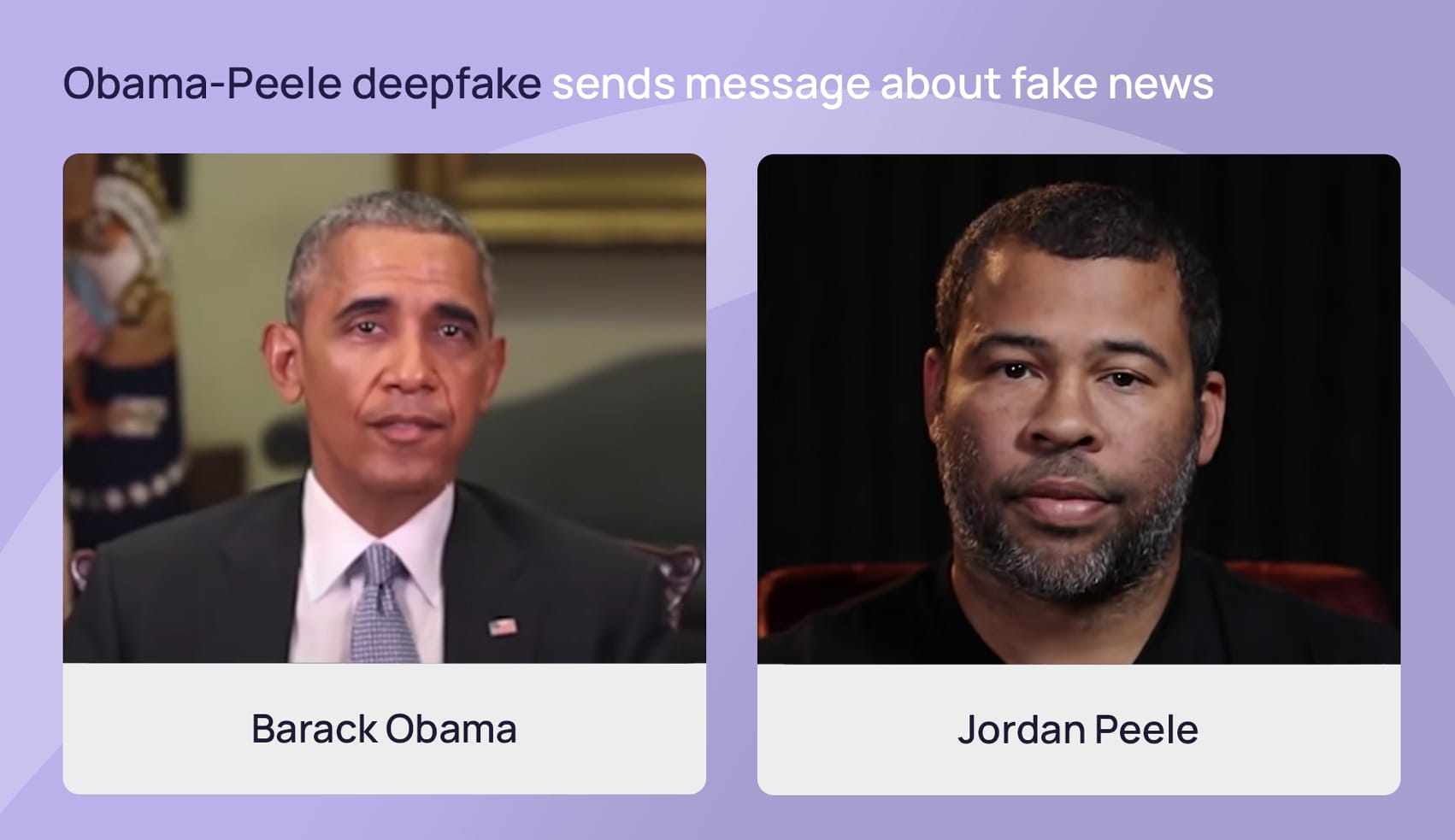

Another clip, made in 2018 by American actor Jordan Peele in collaboration with BuzzFeed, used a deepfake to make its point about fake news heard (and seen) loud and clear. It shows former US President Barack Obama discussing the potential dangers of deepfake videos, with Peele’s voice and lip movements overlaid onto Obama. This made it appear, amongst other things, as if Obama was calling former US President Donald Trump a “dipshit”.

Alleged deepfake attacks also caused a stir in Germany when the mayor of Berlin, Franziska Giffey, joined a video conference with Kiev’s mayor Vitali Klitschko to discuss the Ukraine war. The conversation had lasted 15 minutes when it was discovered that this was not Vitali Klitschko himself, but a “cheapfake” in which manipulated audio was dubbed over existing video. The incident made clear how far imposters are willing to go with video manipulation.

How can you spot a deepfake?

While it’s becoming increasingly difficult to recognise deepfake videos, cyber security providers are constantly improving their recognition algorithms. In this tug of war over who has the better tech, it’s often citizens who are caught in the middle. However, you don’t need to be an expert to discern whether the video you are seeing is real.

To begin with, be wary of the source. Trace the video back to where it was posted and by whom. Remember the Tom Cruise video? It was posted by @deeptomcruise on TikTok. Not Tom Cruise. Oftentimes, you need to make a judgement on the legitimacy of the source if it isn’t already explicit. Whether the source is a fan account or a news publication is often a good indication.

Search engines are a powerful tool that can help you dig a little deeper and give you details like how far an image has traveled, unearth counterfeits, or show you where it originated from. Use search engines to do a reverse image check.

- Take a screenshot from the video you think may be a deepfake.

- Upload this image onto a search engine like Google Images or Bing Images.

- Check the image’s history: where it has been used, which other sources have posted or used it, and whether another version exists somewhere.
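If you want a feel for how two versions of an image can be compared automatically, the sketch below uses a simple "average hash". This is only an illustration of the general idea of matching near-identical images; it is an assumption, not a description of how Google Images or Bing Images actually work. The tiny 2×2 pixel grids stand in for screenshots that would normally be scaled down to a small grayscale grid first, and all names here are hypothetical.

```python
from typing import List

Grid = List[List[int]]  # grayscale pixel values, 0 (black) to 255 (white)


def average_hash(pixels: Grid) -> List[int]:
    """Return one bit per pixel: 1 if brighter than the image's mean, else 0."""
    flat = [value for row in pixels for value in row]
    if not flat:
        raise ValueError("image must contain at least one pixel")
    mean = sum(flat) / len(flat)
    return [1 if value > mean else 0 for value in flat]


def hamming_distance(hash_a: List[int], hash_b: List[int]) -> int:
    """Count the positions at which two equally long hashes differ."""
    if len(hash_a) != len(hash_b):
        raise ValueError("hashes must have the same length")
    return sum(a != b for a, b in zip(hash_a, hash_b))


# Illustrative data only: a reference frame, a uniformly brightened copy,
# and a copy in which part of the picture has been changed.
original = [[10, 200], [220, 30]]
brightened = [[30, 220], [240, 50]]
altered = [[10, 200], [20, 230]]

base = average_hash(original)
print(hamming_distance(base, average_hash(brightened)))  # 0: same picture
print(hamming_distance(base, average_hash(altered)))     # 2: content differs
```

A distance of zero suggests the same picture even after a brightness change, while a larger distance hints that the content itself differs and deserves a closer look.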

Fact-check it yourself. Find the video or story on a credible source elsewhere and compare the versions. Ask yourself whether this video can be true. Don’t share it further unless you are absolutely sure. Trust your gut feeling, but trust reliable sources as well.

Here are some additional cues you can look for to distinguish a real video from a deepfake:

- Unnatural body movements

Check whether the person moves erratically and whether the movements from one frame to the next line up. Are the positions of the head and body uncoordinated?

- Odd coloration

Keep an eye on the video color, and on whether the lighting fluctuates from one frame to the next. Ask yourself if the person’s natural skin color seems unusual. Are there shadows where there shouldn’t be?

- Strange eye movements

If you notice the person’s eyes moving unnaturally, be cautious. If their blinking does not follow a natural rhythm, that is another sign. Are they blinking at all?

- Awkward facial expressions/emotions

One of the first obvious things to take note of is the person’s facial expressions. See if their face or nose is pointing in a different direction or if something doesn’t look right. Does their face fail to match the emotions of the conversation?

- Unnatural teeth/hair

AI is not the most advanced when it comes to teeth. If you cannot make out individual teeth, or the person appears to have perfect hair without any flyaways, that’s a sign to watch out for. Is there not a single stray hair in sight?

- Inconsistent audio

Deepfake creators are more focused on perfecting the video, so be wary of strange audio. Pay attention to the person’s mouth and whether what they are saying looks genuine. Can you hear unusual background noises, or is there no audio at all?

- Blurry visual alignment

The most striking element will be what you see. Be mindful of the edges of the speaker and whether they appear out of focus. If the image is not correctly aligned with the person’s posture, that’s your hint. Does the video contain frames that seem unnatural?

How dangerous are deepfakes for companies?

Because deepfakes are so difficult to tell apart from their original counterparts, they are extremely popular among cybercriminals. Politicians, celebrities, and companies are common targets. For example, cybercriminals can create videos in which the CEO of a company is making controversial statements about confidential or sensitive issues. The financial and reputational damage that this can cause, if undetected, is immense and often irreversible.

In 2020, cybercriminals stole 35 million dollars from a bank in Dubai by contacting one of the directors with a fake phone call. The criminals used voice swapping to pose as the CEO of a major company that had an account with the bank. Judging by the realistic audio, the director believed the call was genuine and approved the 35-million-dollar transaction. Such incidents, in which money is the central motive, are reminiscent of ransomware attacks and “big game hunting”, in which cybercriminals often dupe organisations out of large sums of money. Cases like this show us how dangerous deepfakes can be not just for individuals, but for larger corporations as well.

Staying clear of digital deceit

Superimposing faces, adding fake audio, and creating fake scenarios may have started small, but today these practices have disrupted the world with dangerous consequences. The accuracy, speed, and novelty of deepfakes continue to escalate at a terrifying pace. What was once an expert’s foul play is today child’s play. The access to and reach of AI technology for creating deepfakes mark the beginning of a great digital disorder, one we must be very, very cautious of.

The quality of deepfake videos has become frighteningly accurate as technology advances and algorithms improve. This is why the human factor, in conjunction with technology, is crucial for better recognizing deepfakes. Basic awareness of how to spot the indicators of falsified content can eliminate many risks at an early stage.

While the tips listed above can help you recognise deepfakes, they are unfortunately not enough to guarantee 100% protection. Organizations can improve their security by using both technical precautions and cyber security awareness training, like that offered by SoSafe, to make their employees more aware of potentially dangerous content and train them to respond to it better. Additionally, SoSafe offers a specialized lesson on deepfakes, equipping organisations with the knowledge and tools to identify and mitigate the risks associated with this sophisticated digital fraud. This training is crucial in empowering employees to discern between genuine and manipulated content, thereby enhancing an organisation’s overall security posture against emerging cyber threats.